Understanding AI Warfare Escalation

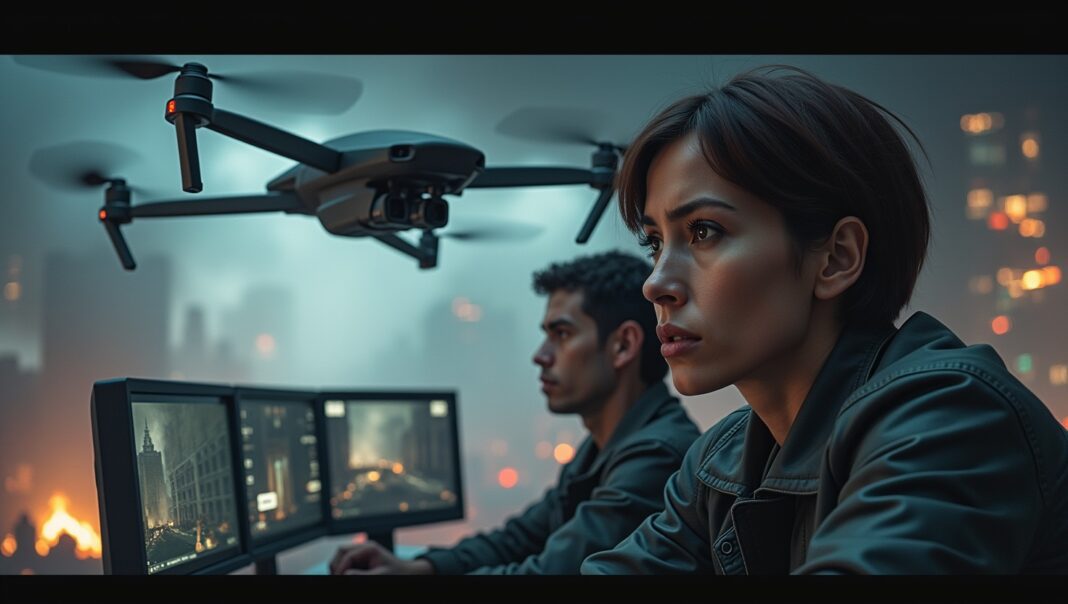

Let’s be clear about what we’re discussing. This isn’t about Terminator-style robots roaming a post-apocalyptic wasteland. Not yet, anyway. AI warfare is the integration of artificial intelligence into military operations, from logistics and surveillance to target recognition and, yes, engagement. It’s the next logical, and perhaps terrifying, step in the millennia-long evolution of military technology.

The Rise of Autonomous Weapons Systems

At the heart of this debate are autonomous weapons systems, often dubbed ‘killer robots’. These aren’t just sophisticated drones; they are systems designed to independently search for, identify, and kill human targets without direct human control. Think of the difference between a remote-controlled car and a self-driving Tesla, except that here the self-driving one is armed with missiles. The implications are profound. Who is responsible when a machine makes a mistake? Who is accountable when an algorithm pulls the trigger? The lines of accountability are blurring faster than we can draw them.

The Role of AI Conflict Protocols

In any sane world, the development of such weapons would be accompanied by a robust set of rules. These are what we call AI conflict protocols, the digital equivalent of the Geneva Conventions. They are meant to be the ethical and legal frameworks governing how AI is used in combat, establishing fail-safes and ensuring that a human always remains ‘in the loop’ for any decision to use force.

The problem? They barely exist. We are building the weapons first and considering the rules second. As a recent NATO report on AI ethics highlights, while principles are being discussed, concrete, binding protocols are lagging dangerously behind technological development. We are creating a generation of weapons without a coherent doctrine for their use, which is a recipe for absolute chaos.

The Importance of International AI Treaties

This doctrinal vacuum is why so many are shouting for international AI treaties. Unregulated AI warfare creates a dangerously unstable ‘use it or lose it’ dynamic. Nations might feel compelled to deploy autonomous systems preemptively, fearing that an adversary who moves first will gain an insurmountable advantage. It’s a digital-age arms race, but one where the weapons can learn, adapt, and potentially escalate a conflict beyond human control in mere seconds.

Efforts are underway, with bodies like the United Nations hosting discussions. Yet, progress is glacial. Getting global powers like the US, China, and Russia to agree on limitations is a monumental task, especially when the technology is seen as the next great military game-changer. The incentive to cheat, or to simply refuse to sign on, remains overwhelmingly high.

Tensions Between AI Development and Safety Regulations

And this is where the commercial world crashes headfirst into geopolitics. The tension between the relentless pace of AI innovation and the desperate need for safety regulations has never been more acute. As a recent CNBC article from February 2026 points out, the AI industry is in a state of frenzied, high-stakes competition.

The Growing Conflict

Nvidia’s CEO Jensen Huang recently declared that “AI just went through its third inflection”, a polite way of saying the technology is accelerating beyond anyone’s control. Companies are locked in a battle for supremacy, and safety concerns are taking a backseat to market share and computational power. OpenAI, once the darling of the AI safety movement, is now reportedly running aggressive ad campaigns, a stark reversal from Sam Altman’s previous anti-monetisation pronouncements. When the pressure to ship product is this intense, ethical considerations can quickly become optional extras.

This conflict is perfectly illustrated by the case of Anthropic. The company, founded on principles of AI safety, was reportedly blacklisted by the Trump administration for refusing Pentagon demands, even as it tried to steer its own safety policies by what it called “nonbinding, publicly declared targets”. It is hard to run a business on non-binding principles when your competitors are happily cashing government cheques.

Voices in the Debate

The debate is becoming viciously political. The same CNBC report notes the emergence of a $125 million super PAC dedicated to opposing AI regulation. Backers such as the venture capital firm Andreessen Horowitz and Palantir co-founder Joe Lonsdale are vocal in their opposition to what they see as innovation-killing red tape. They argue that slowing down development in the West will simply hand the advantage to its adversaries.

It’s an argument that has a certain cold logic, but it ignores the fundamental nature of this technology. We’re not just building a better tank; we are creating a form of intelligence we don’t fully understand and can barely control. Setting it loose on the battlefield because we’re afraid of falling behind is perhaps the most short-sighted gamble in human history.

What Happens Next?

The progression from chatbot to combatant is no longer theoretical. The commercial AI explosion is a direct preview of the military AI revolution. The same models being fine-tuned to act as our personal assistants can be re-purposed for target acquisition. The same competitive pressures driving OpenAI to monetise are forcing defence contractors to integrate autonomy at breakneck speed.

We are at a crucial juncture. We can continue down this path, allowing the unchecked dynamics of corporate competition and geopolitical rivalry to dictate the future of warfare. Or, we can pause and demand that binding, enforceable AI conflict protocols and international AI treaties are put in place before the first fully autonomous shot is fired. This requires genuine collaboration between nations, something that seems in short supply. It also requires tech companies to look beyond their next funding round and take genuine responsibility for the power they’ve unleashed.

What do you think? Can we regulate AI in warfare, or is the genie already out of the bottle? Is the commercial AI boom making a military AI catastrophe inevitable? Let me know your thoughts in the comments.